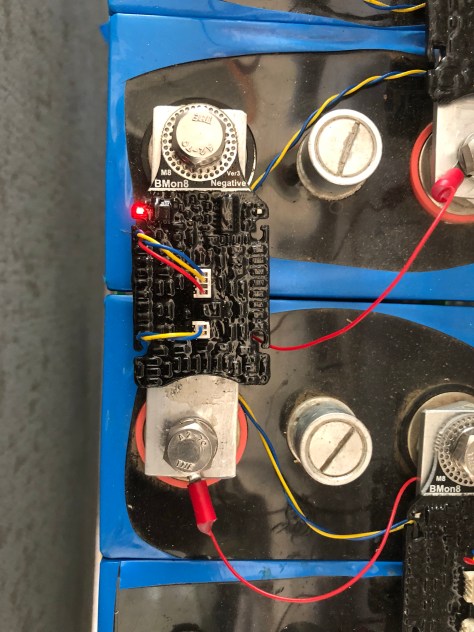

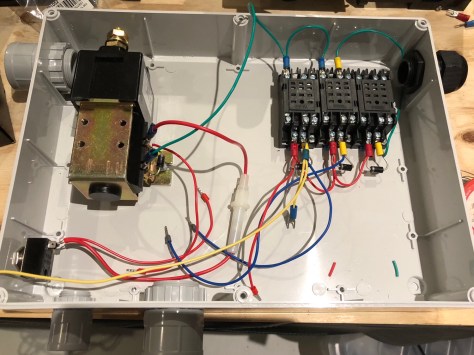

This box of stuff will protect the battery in my home-brew 23 kWh storage system. Given that the failure modes for lithium batteries include dying permanently and catching fire, you want to get this right. It will be able to stop the inverters from draining the battery and, if things get really outside the comfort zone, it will disconnect the battery completely. I could disconnect the solar panels from the inverters if I needed an absolute override for high voltage, but I don’t think that’s going to be a problem.

The Batrium BMS is the brains of the operation.

The big thing on the left is the contactor. It’s designed for forklifts. It’s rated at 48 volts 200 amps DC. It came via AliExpress.

The relay coils are also 48 volt DC, although the relay contacts aren’t really rated for that many volts. I’m not expecting problems because the main contactor’s coil draws only two hundred milliamps, which isn’t going to sustain much of an arc.

If either relay is closed the contactor will close. The BMS has a pair of FET outputs that connect to ground when enabled. One is programmed as Charge OK and the other as Discharge OK. They can also be set to open and close a bistable contactor, but that’s not what I have, and bistable contactors scare me.
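The relay logic boils down to a simple OR. Here is a minimal sketch of it, assuming each FET output energises exactly one relay coil and the two relay contacts are wired in parallel to the contactor coil. The function name and the truth-table loop are just for illustration; none of this is Batrium code.

```python
def contactor_closed(charge_ok: bool, discharge_ok: bool) -> bool:
    """Model of the wiring: either relay closing feeds the contactor coil.

    Assumes one relay per BMS output, with the relay contacts in parallel.
    """
    return charge_ok or discharge_ok


# Print the truth table for the two BMS outputs.
for charge_ok in (False, True):
    for discharge_ok in (False, True):
        state = "closed" if contactor_closed(charge_ok, discharge_ok) else "open"
        print(f"Charge OK={charge_ok!s:5}  Discharge OK={discharge_ok!s:5}  contactor {state}")
```

The upshot is that the battery only gets fully disconnected when the BMS says both charging and discharging are not OK.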

I designed everything using a sketch tool on my iPad called You Doodle.

Then I started to put it together. Here’s the result so far.

Next task is making the aluminium strap between the contactor and the current shunt. Then I’m ready to bolt it all to the wall.

UPDATE

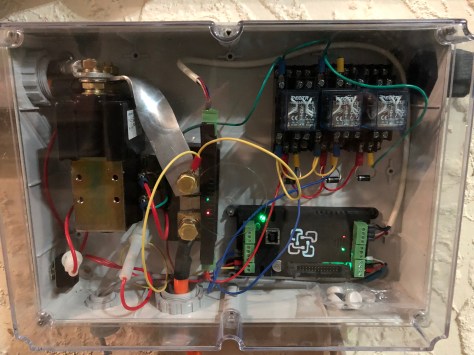

Here it is mounted to the wall:

Note the shunt is operating BACKWARDS. There is a setting in the shunt setup window that tells the WatchMon that the shunt is reporting backwards.

After I reassembled everything I got really wildly fluctuating readings in the tool.

Pulling everything apart I discovered that one of the two brass screws that hold the sensor to the shunt and carry the voltage from the shunt to the sensor was loose. I probably put too much force on the sensor when wrangling everything into place.

One day I may replace that awful ugly aluminium bar. I went to the trouble of twisting it so you can see the LEDs but then I discovered that they are visible through the side of the sensor too! Sadly twisting it made it less than pretty.

Another interesting observation is that the contactor runs very hot. I know the EV community have used various circuits to reduce the average hold current on the contactor but it’s probably easier to put a little fan in the side of the box.
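For a rough idea of where the heat comes from, here is a back-of-envelope sketch. It assumes the contactor coil runs at 48 volts and that the two hundred milliamps mentioned earlier is its steady hold current, and it treats the coil as a plain resistor. The `duty` parameter is a hypothetical stand-in for a PWM economiser with a freewheel diode, where the average coil current scales roughly with duty cycle. I haven't tested whether this contactor will actually stay closed at a reduced current.

```python
COIL_VOLTS = 48.0  # assumed coil supply voltage
COIL_AMPS = 0.2    # steady coil current quoted in this post


def hold_power(volts: float, amps: float, duty: float = 1.0) -> float:
    """Approximate coil dissipation in watts at a given PWM duty cycle.

    Resistive approximation: average current = duty * V / R, power = I^2 * R.
    Ripple and switching losses are ignored.
    """
    resistance = volts / amps
    avg_current = duty * volts / resistance
    return avg_current ** 2 * resistance


for duty in (1.0, 0.75, 0.5):
    watts = hold_power(COIL_VOLTS, COIL_AMPS, duty)
    print(f"duty {duty:.2f}: about {watts:.1f} W")
```

At full duty that works out to roughly 9.6 watts sitting in the coil all day, which goes some way to explaining the temperature. In this simple model, halving the average current would cut the heat to about a quarter.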